The Faster You Go, the Wronger You Get

On how AI makes it easy to confidently build the wrong thing at record speed.

I was halfway through Cat Wu’s conversation on Lenny’s podcast when I paused and wrote something down.

She’s the head of product for Claude Code, and she was describing how her team decides what to build. I kept waiting for the part where everything I know about product and engineering became obsolete. But that part never came.

What she described was product management. Know your user. Understand the problem. Cut scope. Ship, learn, adjust. Instead of revolution, I got confirmation.

AI has changed something real. When the task is simple, well-defined, clearly scoped, and has enough context around it, a model can execute it much faster than an engineer. That’s not hype. For those cases, the bottleneck really has moved.

But “those cases” is doing a lot of work here.

Somewhere along the way, the narrative expanded. “AI is changing how we build software” became “AI solved execution.” And once you believe that, the next move feels obvious: make the specs clearer, hand bigger chunks to agents, let the machine run.

LLMs, by how they work, encourage this. Give them ambiguity and they fill in the gaps confidently, often wrongly. Give them precision and they perform substantially better. The feedback is immediate: specify more, get better output. So teams specify more. Planning gets heavier. Designs get more detailed upfront. Work gets broken into larger agent-sized chunks.

Nobody sits down and says, “Let’s go back to waterfall.” But watch the behavior. LLMs can shorten feedback loops, but they can also tempt teams to skip them entirely. And the second path feels faster at first. If you believe typing code was always the bottleneck, then it feels like a no-brainer.

Yet there’s a reason agile came to be. There’s a reason XP practitioners are so intense about small steps, tight feedback loops, and test-first development. It’s not just a process preference; it’s a deep claim about software work:

In complex software, you don’t discover what to build and then build it. You discover what to build by building it.

The data model looks right until you write the function. The abstraction feels clean until the second use case arrives. The coupling is invisible until two systems touch. The domain concept seems obvious until you try to name it and realize you don’t actually understand it.[1]

These aren’t failures. They’re the system talking.[2]

You don’t get that signal from a planning session alone. You don’t get it from a beautiful spec. You don’t get it from a perfectly structured prompt either. You get it by staying close to the code, moving in small increments, and letting what you learn change what you do next.

The same is true in product. The best product calls rarely arrive fully formed from a framework. They sharpen through contact with real users, real constraints, and the annoying details of implementation. You build something, watch where people struggle, notice what the system resists, and adjust. That’s where clarity comes from. Not before the work, through it.

This is the irony. For the sake of productivity, AI can make old habits feel attractive again: the very habits agile was trying to move us away from.

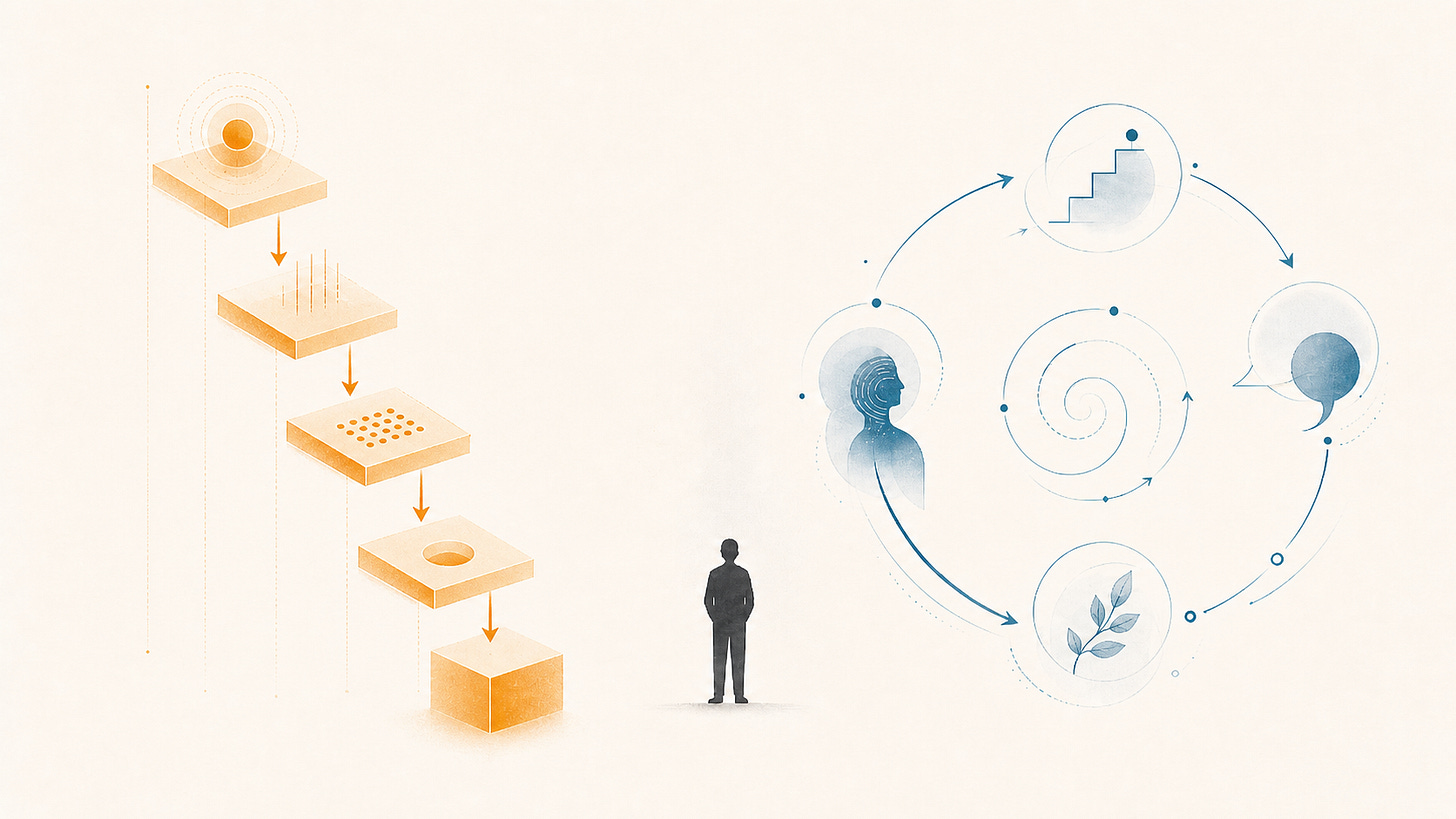

The feedback loop is easy to see:

Better prompts reward clearer intent.

Clearer intent rewards making assumptions explicit.

Making assumptions explicit can drift into upfront specification if teams are not careful.

Upfront specification can create the illusion that the unknowns have been resolved before the system pushes back.

And now the mistake can get more expensive, because you can move faster and build a lot more before reality corrects you. That’s the illusion of speed. You’re not necessarily going faster. You may just be going faster toward the wrong thing, with less ability to course-correct.

None of this means agents are useless. Far from it. They’re here to stay, and the teams and individuals who ignore them will fall behind. Agents can help you spike faster, test faster, refactor faster, generate options faster, and challenge your thinking faster.

But they don’t remove the need for judgment.

They can assist with judgment-adjacent work. They can summarize tradeoffs, surface edge cases, review code, and suggest alternatives. They can even help you sharpen your own judgment. That’s valuable. But they can’t be accountable for the call.

Knowing what to build, knowing when the system is telling you something, knowing when to cut, knowing when the fast path is the wrong path: these were never just “execution.” They were always the harder parts, and pretending AI has solved them is questionable, to say the least.

I suspect the teams that build the best products over the next five years won’t be the ones that hand the most work to agents. They’ll be the ones that use agents to compress loops, not replace them. They’ll be the ones that use agents without losing contact with the code, the users, and the reality of their own system.

That was always the game. AI didn’t change it. It just made it a lot easier to make mistakes and not learn from them.

[1] As mentioned in the last post, I was reminded of this the hard way while building my own product using AI.

[2] h/t to my friend Dragan Stepanović for bringing this critical aspect of programming to my attention recently in the context of agentic development.